|

It is sufficient to solve for s ∈ R, x ∈ R, and y ∈ R that satisfy the following equations:

One approach is to write x and y in terms of s and then solve for s using the second equation.

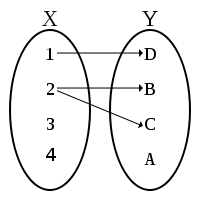

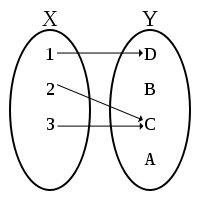

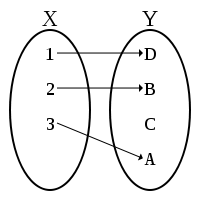

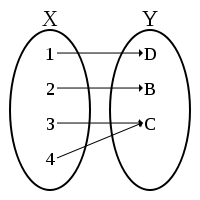

Example: Solve the following three problems.

-

Solve the following equation for x ∈ R:

-

Solve the following equation for x ∈ R and y ∈ R:

| |

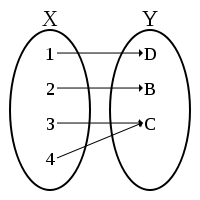

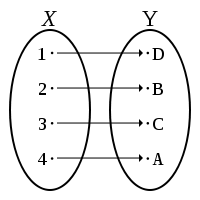

| | and | | are linearly dependent

|

|

-

Determine if the following two vectors are linearly dependent or linearly independent:

In the case of vectors in R2, the properties of linear dependence and orthogonality can be defined in terms of

the slopes of the vectors involved. However, note that these definitions in terms of slope are a special case for R2.

The more general definitions (in terms of scalar multiplication and dot product, respectively) apply to any vectors in a vector space.

Thus, we can derive the slope definitions from the more general definitions.

Fact: If two vectors [ x; y] ∈ R2 and [ x'; y'] ∈ R2 are linearly dependent, then x/ y = x'/ y'.

If the two vectors a linearly dependent, then there exists a scalar s ∈ R such that:

Fact: If two vectors [ x; y] ∈ R2 and [ x'; y'] ∈ R2 are orthogonal, then x/ y = - y'/ x':

Example: List all unit vectors orthogonal to:

| |

It is sufficient to solve for x ∈ R and y ∈ R that satisfy the following equations:

We can solve the above for the two vectors by first solving for both possible values of

x, then finding the corresponding values for y:

Thus, the two vectors are:

Orthogonal projections of vectors have a variety of interpretations and applications; one simple interpretation of the projection of

a vector v onto a vector w is the shadow of vector v on w with respect to a light source that is orthogonal to w.

Example: Consider the vector following vectors, where u, v ∈ R2 and u is a unit vector:

What is the orthogonal projection of v onto u? It is simply the x component of v, which is x. Thus:

| | | is the projection of v onto u

|

|

Notice that we can obtain the length of the orthogonal projection using the dot product:

Since u is a unit vector, we can then simply multiply u by the scalar x to obtain the actual projection:

The above example can be generalized to an arbitrary vector v and unit vector u; then, it can be generalized to any vector u by using

the fact that u/||u|| is always a unit vector.

Example: Compute the orthogonal projection of v onto u where:

We can apply the formula:

| = | | | = | | | = | | | = | | |

(v ⋅ (u/||u||)) ⋅ (u/||u||) | |

| = | | | = | | | = | | | = | | | | = | |

Other tools available online

can be used to perform and check computations such as the above.

Example: Solve the problems below.

-

Compute the orthogonal projection of v onto u where u is a unit vector and:

We can apply the formula:

| (v ⋅ (u/||u||)) ⋅ (u/||u||) | |

| = | | | = | | | = | |

-

Compute the projection of v onto w where:

We can apply the formula:

| = | | | = | | | = | | | = | | |

(v ⋅ (w/||w||)) ⋅ (w/||w||) | |

| = | | | = | | | = | | | = | |

Fact: Given the points u = [ x1, y1] and v = [ x2, y2], we can find the equation of the line between these two points in the

form y = mx + b.

We recall the definition for a line L defined by two points:

| = | | { p | ∃ a ∈ R, p = a (u - v) + u }.

|

|

Thus, if [ x; y] is on the line, we have:

This implies the following system of equations (one from the x components in the above, and one from the y components):

If we solve for a in terms of x, we can recover a single equation for the line:

| = | | | = | | ((x - x1)/(x1 - x2)) (y1 - y2) + y1 |

| | = | | ((y1 - y2)/(x1 - x2)) (x - x1) + y1

|

|

Notice that we can set m = ( y1 - y2)/( x1 - x2) because that is exactly the slope of the line between [ x1; y1] and [ x2; y2].

We see that we can set b = - m x1 + y1.

2.5. Solving common problems involving vector algebra

We review the operations and properties of vectors introduced in this section by considering several example problems.

Example: Are the vectors [2; 1] and [3; 2] linearly independent?

There are at least two ways we can proceed in checking pairwise linear independence. Both involve checking if the vectors

are linearly dependent. If they are linearly dependent, then they cannot be linearly independent. If they are not linearly

dependent, they must be linearly independent.

We can compare the slopes; we see they are different, so they must be linearly independent.

1/2 ≠ 2/3

We can also use the definition of linear dependence. If they are linearly dependent, then we know that

Does such an a exist? We try to solve for it:

Since we derive a contradiction, there is no such a, so the two vectors are not linearly dependent, which means they

are linearly independent.

Example: Given v = [15; 20], list the vectors that are orthogonal to v, but of the same length as v.

The two constraints on the vectors [x;y] we seek are:

We take the first constraint and solve for x in terms of y.

We now plug this into the second equation.

Thus, the vectors are [-20; 15] and [20; -15].

Example: Given constants a, b, c ∈ R, find a vector orthogonal to the plane P defined

by:

| = | | { | | | a (x + y + z) + b (y + z) + c z = 0 }.

|

|

We only need to rewrite the equation defining the plane in a more familiar form. For any [ x; y; z] ∈ P, we know that:

| a (x + y + z) + b (y + z) + c z | |

| = | | |

ax + (a + b) y + (a + b + c) z | |

| = | | | = | |

In order to be orthogonal to a plane, a vector must be orthogonal to all vectors [ x; y; z] on that plane.

Since all points on the plane are orthogonal to [ a; a + b; a + b + c] by the above argument, [ a; a + b; a + b + c] is such a point.

Example: Define the line L that is orthogonal to the vector [ a; b] but also crosses [ a; b] (i.e, [ a; b] falls on the line).

We know that the line must be parallel to the vector that is orthogonal to [a; b]. The line L0 crossing [0; 0] that is orthogonal

to [a; b] is defined as:

However, we need the line to also cross the point [ a; b]. This is easily accomplished by adding the vector [ a; b] to all the points

on the orthogonal line going through [0; 0] (as defined above). Thus, we have:

If we want to find points [ x ; y ] on the line directly without the intermediate term [ x' ; y' ], we can solve for

[ x' ; y' ] in terms of [ x ; y ]:

We can then substitute to obtain a more direct definition of L (in terms of a constraint on the

vectors [ x ; y ] in L):

| = | | | = | | { ( | | − | | ) + | | | | | ⋅ ( | | − | | ) = 0 } |

| | = | |

Example: Is [7; −1] on the line defined by the points u = [19; 7] and v = [1; −5]?

To solve this problem, we recall the definition for a line defined by two points:

{ p | ∃ a ∈ R, p = a (u - v) + u }.

Thus, we want to know if [7; -1] is in the set defined as above. This can only occur if there exists

an a such that [7; -1] = a (u - v) + u.

We solve for a; if no solution exists, then the point [7; -1] is not on the line L. If an a exists, then it is. In this case,

a = 1/3 is a solution to both equations, so [7; -1] is on the line.

Example: Define the line that is orthogonal to the vector [3; 5] but also crosses [3; 5] (i.e, [3; 5] falls on the line).

We know that the line must be parallel to the vector that is orthogonal to [3; 5]. The line crossing [0; 0] that is orthogonal

to [3; 5] is defined as:

We can rewrite the above in a more familiar form:

However, we need the line to also cross the point [3; 5]. This is easily accomplished by adding the vector [3; 5] to all the points

on the orthogonal line going through [0; 0] (as defined above). Thus, we have:

We can rewrite the above by defining:

Now we substitute [ x; y] with [ x', y'] in our definition of the line:

We can now write the equation for the line:

Notice that, alternatively, we could have instead simply found the y-intercept b ∈ R of the following equation using the point [3;5]:

Example: Is [8; -6] a linear combination of the vectors [19; 7] and [1; -5]?

We recall the definition of a linear combination and instantiate it for this example:

Thus, if we can solve for a and b, then [8; −6] is indeed a linear combination.

Example: Are the vectors V = {[2; 0; 4; 0], [6; 0; 4; 3], [1; 7; 4; 3]} linearly independent?

The definition for linear independence requires that none of the vectors being considered can be expressed

as a sum of the others. Thus, we must check all pairs of vectors against the remaining third vector not in the pair.

There are 3!/(2!*1!) such pairs:

| can | | be expressed as a combination of | | and | | ? |

| |

can | | be expressed as a combination of | | and | | ? |

| |

can | | be expressed as a combination of | | and | | ?

|

|

For each combination, we can check whether the third vector is linearly dependent.

If it is linearly dependent, we can stop and say that the three vectors are not

linearly independent. If it is not, we must continue checking all the pairs. If all the pairs are incapable of

being scaled and added in some way to obtain the third vector, then the three vectors are linearly independent.

not (u, v, w are linearly independent) iff (∃ a,b ∈ R, u,v,w ∈ V, w is a linear combination of u and v)

Notice that this an example of a general logical rule:

not (∀ x ∈ S, p) iff (∃ x ∈ S, not p)

We check each possible combination and find that we derive a contradiction if we assume they are not independent.

Thus, V is a set of linearly independent vectors.

2.6. Using vectors and linear combinations to model systems

In the introduction we noted that in this course, we would define a symbolic language for working with a certain

collection of idealized mathematical objects that can be used to model system states of real-world systems in an abstract

way. Because we are considering a particular collection of objects (vectors, planes, spaces, and their relationships),

it is natural to ask what kinds of problems are well-suited for such a representation (and also what problems are

not well-suited).

What situations and associated problems can be modelled using vectors and related operators and properties?

Problems involving concrete objects that have a position, velocity, direction, geometric shape, and relationships between

these (particularly in two or three dimensions) are natural candidates. For example, we have seen that it is possible

to compute the projection of one vector onto another. However, these are just a particular example of a more general

family of problems that can be studied using vectors and their associated operations and properties.

A vector of real numbers can be used to represent an object or collection of objects with some fixed number of characteristics (each

corresponding to a dimension or component of the vector) where each characteristic has a range of possible values.

This range could be a set of magnitudes (e.g., position, cost, mass), a discrete collection of states (e.g.,

absence or presence of an edge in a graph), or even a set of relationships (e.g., for every cow, there are four

cow legs; for every $1 invested, there is a return of $0.02). Thus, vectors are well-suited for representing

problems involving many instances of objects where all the objects have the same set of possible characteristics

along the same set of linear dimensions. In these instances, many vector operations also have natural interpretations.

For example, addition and scalar multiplication (i.e., linear combinations) typically correspond to the aggregation

of a property across multiple instances or copies of objects with various properties (e.g., the total mass of a

collection of objects).

In order to illustrate how vectors and linear combinations of vectors might be used in applications, we recall the

notion of a system. A system is any physical or abstract phenomenon, or observations of a phenomenon, that

we characterize as a collection of real values along one or more dimensions. A

system state or state a system is a particular collection

of real values. For example, if a system is represented by R4, states of that system are represented by individual vectors

in R4 (note that not all vectors need to correspond to valid or possible states; see the examples below).

Example: Consider the following system: a barn with cows and chickens inside it. There are several dimensions along

which an observer might be able to measure this system (we assume that the observer has such poor eyesight that chickens and

cows are indistinguishable from above):

- number of chickens inside

- number of cows inside

- number of legs that can be seen by peeking under the door

- number of heads that can be seen by looking inside from a high window

Notice that we could represent a particular state of this system using a vector in R4. However, notice also that

many vectors in R4 will not correspond to any system that one would expect to observe. Usually, the number of

legs and heads in the entire system will be a linear combination of two vectors: the number of legs per cow,

and the number of legs per chicken:

Given this relationship, it may be possible to derive some characteristics of the system given only partial information.

Consider the following problem: how many chickens and cows are in a barn if 8 heads and 26 legs were observed?

Notice that a linear combination of vectors can be viewed as a translation from a vector describing one set of

dimensions to a vector describing another set of dimensions. Many problems might exist in which the values are

known along one set of dimensions and unknown along another set.

Example: We can restate the example from the introduction using linear combinations. Suppose we have $12,000 and two

investment opportunities: A has an annual return of 10%, and B has an annual return of 20%. How much should we invest

in each opportunity to get $1800 over one year?

The two investment opportunities are two-dimensional vectors representing the rate of return on a dollar:

The problem is to find what combination of the two opportunities would yield the desired observation of the entire system:

| | | | | 1 dollar | | 0.1 dollars of interest |

| |

|

⋅ x dollars in opportunity A

+ | | | | 1 dollar | | 0.2 dollars of interest |

| |

|

⋅ y dollars in opportunity B

| |

| = | | | | | | 12,000 dollars | | 1800 dollars of interest |

| |

|

|

|

Next, we consider a problem with discrete dimensions.

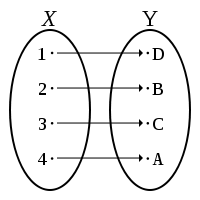

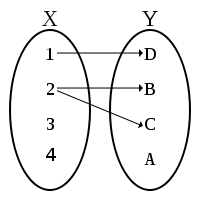

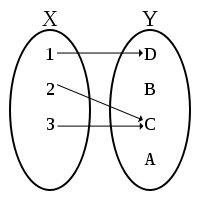

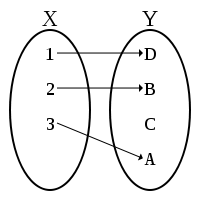

Example: Suppose there is a network of streets and intersections and the city wants to set up cameras

at some of the intersections. Cameras can only see as far as the next intersection. Suppose there are five

streets (#1, #2, #3, #4, #5) and four intersections (A, B, C, and D) at which cameras can be placed, and the city

wants to make sure a camera can see every street while not using any cameras redundantly (i.e., two cameras

should not film the same street).

Vectors in R5 can represent which streets are covered by a camera. A fixed collection of vectors, one for each

intersection, can represent what streets a camera can see from each intersection. Thus, the system's dimensions

are:

- is street #1 covered by a camera?

- is street #2 covered by a camera?

- is street #3 covered by a camera?

- is street #4 covered by a camera?

- is street #5 covered by a camera?

- is there a camera at intersection A? (represented by the variable a below)

- is there a camera at intersection B? (represented by the variable b below)

- is there a camera at intersection C? (represented by the variable c below)

- is there a camera at intersection D? (represented by the variable d below)

Four fixed vectors will be used to represent which streets are adjacent to which intersections:

Placing the cameras in the way required is possible if there is integer solution to the following equation

involving a linear combination of the above vectors:

Example: Suppose a chemist wants to model a chemical reaction. The dimensions of the system might be:

- how many molecules of C3H8 are present?

- how many molecules of O2 are present?

- how many molecules of CO2 are present?

- how many molecules of H2O are present?

- how many atoms of carbon are present?

- how many atoms of hydrogen are present?

- how many atoms of oxygen are present?

Individual vectors in R3 can be used to represent how many atoms of each element are in each type of

molecule being considered:

| C3H8: | | , O2: | | , CO2: | | , H2O: | |

|

|

Suppose we know that the number of atoms in a system may never change during a reaction, and that some quantity

of C3H8 and O2 can react to yield only CO2 and H2O. How many molecules of each compound will be involved in the reaction? That is the solution

to the following linear combination.

For example, suppose we start with 1000 molecules of C3H8 and 5000 molecules of O2. If both of these compounds react to produce only

CO2 and H2O, how many molecules of each will be produced?

Thus, a = 3000 molecules of CO2 and b = 4000 molecules of H2O will be produced.

The notion of a linear combination of vectors is common and can be used to mathematically model a wide variety of problems.

Thus, a more concise notation for linear combinations of vectors would be valuable. This is one of the issues addressed by introducing

a new type of term: the matrix.

3. Matrices

In this section we introduce a new kind of term: a matrix. We define some operations on matrices and some

properties of matrices, and we describe some of the possible ways to interpret and use matrices.

3.1. Matrices and multiplication of a vector by a matrix

Matrices are a concise way to represent and reason about linear combinations and linear independence of vectors (e.g., setwise linear

independence might be difficult to check using an exhaustive approach), reinterpretations of systems using different dimensions,

and so on. One way to interpret a matrix is as a collection of vectors. Multiplying a matrix by a vector corresponds to computing a linear

combination of that collection of vectors.

As an example, we consider the case for linear combinations of two vectors. The two scalars in the linear combination

can be interpreted as a 2-component vector. We can then put the two vectors together into a single object in our

notation, which we call a matrix.

Notice that the columns of the matrix are the vectors used in our linear combination. Notice also that we can now

reinterpret the result of multiplying a vector by a matrix as taking the dot product of each of the matrix rows with the

vector.

Fact:

| = | | | = | | | = | | | = | | | | | | (a,b) ⋅ (x,y) | | (c,d) ⋅ (x,y) |

| |

|

|

|

Because a matrix is just two column vectors, we can naturally extend this definition of multiplication

to cases in which we have multiplication of a matrix by a matrix: we simply multiply each column of the

second matrix by the first matrix and write down each of the resulting columns in the result matrix.

Definition:

| = | | | | | | (a,b) ⋅ (x,y) | (a,b) ⋅(s,t) | | (c,d) ⋅ (x,y) | (c,d) ⋅ (s,t) |

| |

|

|

|

These definitions can be extended naturally to vectors and matrices with more than two components. If we denote using Mij the entry in a matrix M found

in the ith row and jth column, then we can define the result of matrix multiplication of two matrices A and B as a matrix M such that

| Mij = ith row of A ⋅ jth column of B.

|

|

3.2. Interpreting matrices as tables of relationships and transformations of system states

We saw how vectors can be used to represent system states. We can extend this interpretation to matrices and use

matrices to represent relationships between the dimensions of system states. This allows us to interpret matrices as transformations

between system states (or partial observations of system states).

If we again consider the example system involving a barn of cows and chickens, we can reinterpret the matrix as a table of relationships between

dimensions. Each entry in the table has a unit indicating the relationship it represents.

|

chickens |

cows |

|

| heads |

1 head/chicken |

1 head/cow |

|

| legs |

2 legs/chicken |

4 legs/cow |

|

Notice that the column labels in this table represent the dimensions of an "input" vector that could be multiplied by this matrix, and

the row labels specify the dimensions of the "output" vector that is obtained as a result. That is,

if we multiply using the above matrix a vector that specifies the number of chickens and the number of cows in a system state, we will get a

vector that specifies the number of heads and legs we can observe in that system.

| | | | | 1 head/chicken | 1 head/cow | |

2 legs/chicken | 4 legs/cow |

| |

|

⋅

| |

| |

| = | |

Thus, we can interpret multiplication by this matrix as a function that takes system states that only specify the number of chickens and

cows, and converts them to system states that only specify the number of heads and legs:

(# chickens × # cows) → (# heads × # legs)

3.3. Interpreting multiplication of matrices as composition of system state transformations

Example: Suppose that we have a system with the following dimensions.

- number of wind farms

- number of coal power plants

- units of power

- units of cost (e.g., pollution)

- number of single family homes (s.f.h.'s)

- number of businesses

Two different matrices might specify the relationships between some combinations of dimensions in this system.

| M1 = | | | | 100 power/wind farm | 250 power/coal plant | |

50 cost/wind farm | 400 cost/coal plant |

| |

|

,

M2 = | | | | 4 s.f.h./unit power | -2 s.f.h./unit cost | |

1 businesses/unit power | 0 businesses/unit cost |

| |

|

|

|

Notice that these two matrices both represent transformations between partial system state descriptions.

T1: (# wind farms × # coal plants) → (units of power × units of cost)

T2: (units of power × units of cost) → (# s.f.h. × # businesses)

Notice that because the interpretion of a result obtained using the first transformation matches the interpretation of an input to the second, we

can compose these transformations to obtain a third transformation.

T2 o T1: (# wind farms × # coal plants) → (# s.f.h. × # businesses)

This corresponds to multiplying the two matrices to obtain a third matrix. Notice that the units of the resulting matrix can be computed using a

process that should be familiar to you from earlier coursework.

| | | | | 4 s.f.h./unit power | -2 s.f.h./unit cost | |

1 businesses/unit power | 0 businesses/unit cost |

| |

|

⋅

| | | | 100 power/wind farm | 250 power/coal plant | |

50 cost/wind farm | 400 cost/coal plant |

| |

|

| |

| = | |

| | | | 300 s.f.h./wind farm | 200 s.f.h./coal plant | |

100 business/wind farm | 250 businesses/coal plant |

| |

|

|

|

Thus, given some vector describing the number of wind farms and coal plants in the system, we can multiply that vector by (M2 ⋅ M1)

to compute the number of single family homes and business we expect to find in that system.

Example: Suppose that a gram of gold costs $50, while a gram of silver costs $10. After purchasing some of each, you have spent $350 on

15 grams of material. How many grams of each commodity have you purchased?

- Write down four dimensions describing this system.

-

Define a matrix A that can be used to convert a description of a system state that specifies only the amount of gold and silver purchased into

a description of the system state that specifies only the cost and total weight.

- Write down a matrix equation describing this problem and solve it to find the solution.

-

Define a matrix B such that for any description of a system state v that specifies only the total weight and amount spent, B ⋅ v

is a description of that system state that specifies the amount of gold and silver in that system state.

Example: Suppose we characterize our system in terms of two dimensions:

- number of single family homes (s.f.h.'s)

- number of power plants (p.p.'s)

In this example, instead of studying the relationships between dimensions, we want to study how the dimensions

change (possibly in an interdependent way) over time. For example, the following matrix might capture how

the system state evolves from year to year:

| M = | | | | 2 s.f.h. in year 1/s.f.h. in year 0 | -1 s.f.h. in year 1/p.p. in year 0 | |

0 p.p. in year 1/s.f.h in year 0 | 1 p.p. in year 1/p.p. in year 0 |

| |

|

|

|

We can parameterize the above in terms of a year t ∈ R. Notice that the matrix above is just a special case of the matrix below (when t = 0):

| M = | | | | 2 s.f.h. in year t+1/s.f.h. in year t | -1 s.f.h. in year t+1/p.p. in year t | |

0 p.p. in year t+1/s.f.h in year t | 1 p.p. in year t+1/p.p. in year t |

| |

|

|

|

What does M ⋅ M represent? If we consider the units, we have:

| M ⋅ M = | | | | 4 s.f.h. in year t+2/s.f.h. in year t | -3 s.f.h. in year t+2/p.p. in year t | |

0 p.p. in year t+2/s.f.h in year t | 1 p.p. in year t+2/p.p. in year t |

| |

|

|

|

Suppose v = [ x; y] ∈ R2 represents the number of single family homes and factories in a given year. We can then define the number of single

family homes and factories after t years as:

If we wanted to write the number of single family homes and factories as a function of t ∈ R and an initial state x0, y0 ∈ R, we

could nest the dot products as follows and use algebra to simplify:

| = | | 2 ( 2 ( 2 ( ... 2 (2 x0 - y0) - y0 ... ) - y0) - y0) - y0 |

| | = | | 2t x0 - (2t-1 + ... + 4 + 2 + 1) y0 |

| | = | | | = | | | = | | 0 ⋅ xt + 1 ⋅ ( ... (0 ⋅ x2 + 1 ⋅ ( 0 ⋅ x1 + (0 ⋅ x0 + 1 ⋅ y0))) ... ) |

| | = | |

3.5. Matrix operations and their interpretations

The following table summarizes the matrix operations that we are considering in this course.

| term |

definition |

restrictions |

general properties |

| M1 + M2 |

component-wise |

matrices must have

the same number of rows

and columns |

commutative,

associative,

has identity (matrix with all 0 components),

has inverse (multiply matrix by -1),

scalar multiplication is distributive

|

| M1 ⋅ M2 |

row-column-wise

dot products |

columns in M1 = rows in M2

rows in M1 ⋅ M2 = rows in M1

columns in M1 ⋅ M2 = columns in M2 |

associative,

has identity I (1s in diagonal and 0s elsewhere),

distributive over matrix addition,

not commutative in general,

no inverse in general

|

| M -1 |

|

columns in M = rows in M

matrix is invertible

|

M -1 ⋅ M = M ⋅ M -1 = I

|

The following tables list some high-level intuitions about how matrix operations can be understood.

level of

abstraction |

interpretations of multiplication

of a vector by a matrix |

| applications |

transformation of

system states |

extraction of information

about system states |

computing properties of

combinations or aggregations

of objects (or system states) |

conversion of system

state observations

from one set of dimensions

to another |

| geometry |

"moving" vectors in

a space (stretching,

skewing, rotating,

reflecting) |

projecting vectors |

taking a linear combination

of two vectors |

reinterpreting vector notation

as referring to a collection

of non-canonical vectors |

level of

abstraction |

interpretations of multiplication of two matrices |

| applications |

composition of system state

transformations or conversions |

| geometry |

sequencing of motions of vectors within

a space (stretching, skewing, rotating,

reflecting) |

level of

abstraction |

invertible matrix |

singular matrix |

| applications |

reversible transformation

of system states |

extraction of complete

information uniquely determining

a system state |

irreversible transformation

of system states |

extraction of incomplete

information about

a system state |

| geometry |

reversible transformation or motion

of vectors in a space |

projection onto a strict subset of

a set of vectors (space) |

| symbolic |

reversible transformation of

information numerically encoded in matrix

(example of such information: system of

linear equations encoded as matrix)

|

irreversible/"lossy" transformation of

information encoded in matrix |

Suppose we interpret multiplication of a vector by a matrix M as a function from vectors to vectors:

f(v) = M v.

Fact: Notice that for any M, if f(v) = M v then f is always a function because M v has only one possible result (i.e.,

matrix multiplication is deterministic) for a given M and v.

Fact: If f( v) = M v, then f is invertible if M is an invertible matrix. The inverse of f is then defined to be:

f -1(v) = M -1 v.

Notice that f -1 is a function because M -1 v only has one possible result. Notice that f -1 is the inverse of f because

f -1(f(v)) = M -1 M v = I v = v.

Fact: If the columns of a matrix M are linearly dependent and f( v) = M v, then M cannot have an inverse. We consider the case

in which M ∈ R2×2. Suppose we have that

If the columns of M are linearly dependent, then we know that there is some s ∈ R such that

This means that we can rewrite M:

Since matrix multiplication can be interpreted as taking a linear combination of the column vectors, this means that for x, y ∈ R,

But this means that for any two vectors [ x; y] and [ x'; y'], if x + sy = x' + sy' then multiplying by M will lead to the same result.

Thus, f is a function that takes two different vector arguments and maps them to the same result. If we interpret f as a relation and take

its inverse f -1, f -1 cannot be a function.

Thus, M cannot have an inverse (if it did, then f -1 would be a function).

Fact: If the columns of a matrix M are linearly independent and f( v) = M v, then M has an inverse. We consider the case

in which M ∈ R2×2. Suppose we have that

If the columns of M are linearly independent, then we know that a d - b % c ≠ 0:

Suppose we pick the following M -1:

The we have that:

| = | | | = | | (1/(a d - b c)) ⋅ | | | | a d - b c | bd - bd | | ac - ac | a d - b c |

| |

| |

| | = | | | = | |

Example: Solve the following problems.

- Determine which of the following matrices are not invertible:

The columns of the first matrix are linearly dependent, so it is not invertible. The first column of

the second matrix can be obtained by multiplying the second column by 0, so the two columns of that

matrix are linearly dependent; thus, it is not invertible. For the third matrix, the following equation

has no solution:

Thus, the third matrix is invertible. It is also possible to determine this by computing the

determinant for each matrix.

-

The matrix below is not invertible, and the following equation is true. What is the matrix? List all four of its components (real numbers).

Since the matrix is not invertible, it must be that its determinant is 0. Thus, we have the

following system of equations:

If we solve the above, we get:

3.6. Matrix properties

The following are subsets of Rn×n that are of interest in this course because they

correspond to transformations and systems that have desirable or useful properties. For some of these

sets of matrices, matrix multiplication and inversion have properties that they do not in general.

| subset of Rn×n |

definition |

closed under

matrix

multiplication |

properties of

matrix multiplication |

inversion |

| identity matrix |

∀ i,j

Mij = 1 if i=j, 0 otherwise |

closed |

commutative,

associative,

distributive with addition,

has identity |

has inverse (itself);

closed under inversion |

| elementary matrix |

can be obtained via an

elementary row operation

from I:

- add nonzero multiple

of one row of the matrix

to another row

- multiply a row by a

nonzero scalar

- swap two rows of the

matrix

Note: the third is a combination

of the first two operations.

|

|

associative,

distributive with addition,

have identity |

have inverses;

closed under inversion |

| scalar matrices |

∃ s ∈ R, ∀ i,j

Mij = s if i=j, 0 otherwise |

closed |

commutative,

associative,

distributive with addition,

have identity |

nonzero members

have inverses;

closed under inversion |

| diagonal matrices |

∀ i,j

Mij ∈ R if i=j, 0 otherwise |

closed |

associative,

distributive with addition,

have identity |

nonzero members

have inverses;

closed under inversion |

matrices with

constant diagonal |

∀ i,j

Mii = Mjj |

|

associative,

distributive with addition,

have identity |

|

| symmetric matrices |

∀ i,j

Mij = Mji |

|

associative,

distributive with addition,

have identity |

|

symmetric matrices

with constant diagonal |

∀ i,j

Mii = Mjj and Mij = Mji |

closed |

commutative,

associative,

distributive with addition,

have identity |

|

| upper triangular matrices |

∀ i,j

Mij = 0 if i > j |

closed |

associative,

distributive with addition,

have identity |

not invertible in general;

closed under inversion

when invertible |

| lower triangular matrices |

∀ i,j

Mij = 0 if i < j |

closed |

associative,

distributive with addition,

have identity |

not invertible in general;

closed under inversion

when invertible |

| invertible matrices |

∃ M -1 s.t. M -1 M = M M -1 = I |

closed |

associative,

distributive with addition,

have identity |

nonzero members

have inverses;

closed under inversion |

| square matrices |

all of Rn×n |

closed |

associative,

distributive with addition,

have identity |

|

Two facts presented in the above table are worth noting.

Fact: Suppose A is invertible. Then the inverse of A -1 is A, because A A -1 = I. Thus, (A -1) -1 = A.

Fact: If A and B are invertible, so is AB. That is, invertible matrices are closed under matrix multiplication. We can show

this by using the associativity of matrix multiplication. Since A and B are invertible, there exist B -1 and A -1 such that:

Thus, since there exists a matrix (B -1 A -1) such that (B -1 A -1) A B = I, (B -1 A -1) is the inverse of AB.

Example:

Given an invertible upper triangular matrix M ∈ R2×2,

show that M -1 is also upper triangular. Hint: write out

the matrix M explicitly.

Suppose we have the following upper triangular matrix:

If it is invertible, then there exists an inverse such that:

This implies that

Because M is invertible, we know that z ≠ 0. Since zc = 0, This means that c = 0. Thus, we have that the inverse is upper triangular.

Alternatively, we can observe that c = 0, and that using the formula for the inverse would yield a lower-left

matrix entry equal to −c/(det M) = 0.

Example: In terms of a, b ∈ R where a ≠ 0 and b ≠ 0, compute the inverse of:

| | | | | | | |

While we could perform the instances of matrix multiplication step-by-step and then invert the result (either by solving an equation or

using the formula for the inverse of a matrix in R2×2), it's easier to recall that diagonal matrices behave in a manner that is very

similar to the real numbers. Thus, the above product is equal to

| | ,

and its inverse simply has the multiplicative inverses of the two diagonal entries as its diagonal entries:

| | .

Another fact not in the table is also worth noting.

Fact: Any product of a finite number of elementary row matrices is invertible. This fact follows from the fact that all

elementary matrices are invertible, and that the set of invertible matrices is closed under multiplication.

Given the last fact, we might ask whether the opposite is true: are all invertible matrices the product of a finite number of elementary

matrices? The answer is yes, as we will see further below.

3.7. Solving the equation M v = w for M with various properties

Recall that for v,w ∈ Rn and M ∈ Rn×n, an equation of the following form

can represent a system of equations:

M v = w

Notice that if M is invertible, we can solve for v by multiplying both sides by M -1. More generally, if M is a member of

some of the sets in the above table, we can find straightforward algorithms for solving such an equation for v.

| M is ... |

algorithm to solve M v = w for v |

| the identity matrix |

w is the solution |

| an elementary matrix |

perform a row operation on M to obtain I;

perform the same operation on w |

| a scalar matrix |

divide the components of w by the scalar |

| a diagonal matrix |

divide each component of w by the

corresponding matrix component |

| an upper triangular matrix |

start with the last entry in v, which is easily obtained;

move backwards through v, filling in the values by

substituting the already known variables

|

| a lower triangular matrix |

start with the first entry in v, which is easily obtained;

move forward through v, filling in the values by

substituting the already known variables

|

product of a lower triangular matrix

and an upper triangular matrix |

combine the algorithms for upper and lower triangular

matrices in sequence (see example below)

|

| an invertible matrix |

compute the inverse and multiply w by it |

Example: We consider an equation M v = w where M is a diagonal matrix (the identity matrix and all scalar matrices are also diagonal

matrices).

Example: We consider an equation M v = w where M is a lower triangular matrix.

Fact: Suppose that M = L U where L is lower triangular and U is upper triangular. How can we solve M v = w?

First, note that because matrix multiplication is associative, we have

We introduce a new vector for the intermediate result U v, which we call u. Now, we have a system of matrix equations.

We first solve for u using the algorithm for lower triangular matrices, then we solve for v using the algorithm for upper triangular

matrices.

Example: Solve the following equation for x, y, z ∈ R:

We first divide the problem into two steps using the intermediate vector [ a ; b ; c ]:

Example: The inverse of a matrix in R2×2 can be computed as follows.

| = | | | | | | d/(ad − bc) | −b/(ad-bc) | | −c/(ad − bc) | a/(ad − bc) |

| |

|

|

|

Thus, if we find that the determinant of a matrix M ∈ R2×2 is nonzero, the algorithm for solving M v = w is

straightforward. Consider the following example.

| = | | | = | | | = | |

| | | | 4/((1⋅4)-(2⋅3)) | −2/((1⋅4)-(2⋅3)) | | −3/((1⋅4)-(2⋅3)) | 1/((1⋅4)-(2⋅3)) |

| |

| | | |

| | = | | | = | |

3.8. Row echelon form and reduced row echelon form

We define two more properties that a matrix may possess.

| M is in row echelon form |

- all nonzero rows are above any rows consisting of all zeroes

- the first nonzero entry (from the left) of a nonzero row is strictly

to the right of the first nonzero entry of the row above it

- all entries in a column below the first nonzero entry in a row are zero

(the first two conditions imply this)

|

| M is in reduced row echelon form |

- M is in row echelon form

- the first nonzero entry in every row is 1;

this 1 entry is the only nonzero entry in its column

|

We can obtain the reduced row echelon form of a matrix using a sequence of appropriately chosen elementary row operations.

Example: Suppose we want to find the reduced row echelon form of the matrix below. We list the steps of the procedure.

Fact: For any matrix M, there may be more than one way to reach the reduced row echelon form using elementary row operations. However,

it is always possible, and there is exactly one unique reduced row echelon form for that matrix M. We do not prove this result in this course,

but fairly short proofs by induction can be found elsewhere (such as here).

Because the reduced row echelon form of a matrix M is unique, we use the following notation to denote it:

rref M.

Example: Determine which of the following matrices are elementary:

The matrices are:

(a) not elementary (not square, so not invertible),

(b) not elementary (composition of two elementary row operations applied to the identity),

(c) not elementary (multiplication of a row by the 0 scalar is not invertible),

and (d) elementary (multiple of first row added to last row).

Example: Determine which of the following matrices are in reduced row echelon form:

The matrices are:

(a) in reduced row echelon form,

(b) not in reduced row echelon form,

(c) in reduced row echelon form,

(d) not in reduced row echelon form,

and (e) in reduced row echelon form.

Example: Suppose the matrix below is in reduced row echelon form. Solve for a, b ∈ R.

Without loss of generality, we can focus on four possibility: a is either zero or nonzero, and

b is either zero or nonzero. We can further simplify this by considering 1 as the only nonzero

value of interest. Then we have that:

- if a = 0 and b = 0, then the matrix is not in reduced row echelon form;

- if a = 0 and b = 1, then the matrix is not in reduced row echelon form;

- if a = 1 and b = 0, then the matrix is in reduced row echelon form;

- if a = 1 and b = 1, then the matrix is not in reduced row echelon form.

Thus, a = 1 and b = 0.

Question: For a given matrix in reduced row echelon form, how many different matrices can be reduced to it (one or more than one)?

Fact: It is always true that rref M = rref (rref M). Thus, the rref operation is idempotent.

Fact: If rref M is I (the identity matrix), then M is invertible. This is because I is an elementary matrix, and the row operations

used to obtain rref M from M can be represented as a product of elementary (and thus, invertible) matrices E1 ⋅ ... ⋅ En. Thus, we have:

| = | | |

(E -1n ⋅ ... ⋅ E -11) ⋅ E1 ⋅ ... ⋅ En ⋅ M | |

| = | | (E -1n ⋅ ... ⋅ E -11) ⋅ I |

| | = | | (E -1n ⋅ ... ⋅ E -11) ⋅ I

|

|

Since elementary matrices and I are invertible, and invertible matrices are closed under matrix multiplication, M must be invertible, too.

Fact: A matrix M ∈ Rn×n is not invertible iff the bottom row of rref M has all zeroes. This is because when all

rows of rref M ∈ Rn×n have at least one nonzero value, rref M must be the identity matrix (try putting a nonzero value on the bottom row, and see what the

definition of reduced row echelon form implies about the rest of the matrix). Since rref M = I, this implies that M must then be invertible by the fact immediately above this one.

Fact: For any invertible matrix M ∈ Rn×n, the reduced row echelon form of M is I ∈ Rn×n.

We can show this is true using a proof by contradiction. Suppose that M is invertible, but rref M ≠ I. We know that rref M can be

obtained via a finite number of elementary row operations E1 ⋅ ... ⋅ En:

(E1 ⋅ ... ⋅ En) M = rref M.

If rref M is not I, then the last row of rref M must consist of only zeroes. But because M is invertible, we have:

Since the product of elementary matrices is invertible, (rref M) ⋅ M -1 is also invertible. But if the last row of rref M consists of only

zeroes, then the last row of (rref M) M -1 also contains only zeroes, so it cannot be that (rref M) M -1 is invertible. Thus, we have a contradiction, so our assumption that rref M ≠ I is false.

The following table provides an alternative illustration of how the contradiction is derived:

| M is invertible |

rref M ≠ I |

| the matrix M -1 exists |

the last row of rref M is all zeroes |

| (E1 ⋅ ... ⋅ En) M = rref M |

| ((E1 ⋅ ... ⋅ En) M) M -1 = (rref M) ⋅ M -1 |

| E1 ⋅ ... ⋅ En = (rref M) ⋅ M -1 |

the last row of (rref M) ⋅ M -1

is all zeroes |

(rref M) ⋅ M -1 is invertible

because it is a product of the

invertible matrices E1, ..., En |

(rref M) ⋅ M -1 is not invertible

because multiplication by it is

a many-to-one function |

The above result implies the following fact.

Fact: If a matrix M is invertible, it is the product of a finite number of elementary matrices. This is because rref M is

the identity, which is an elementary matrix, and M can be reduced via a finite number of invertible row operations to I. Thus, the

elementary matrices can be used to generate every possible invertible matrix.

Example: Suppose that for some matrix M ∈ R2×2, the following row operations can be applied to M (in the order

specified) to obtain the identity matrix I:

- add the bottom row to the top row;

- swap the two rows;

- multiply the bottom row by 1/3.

Find the matrix M -1.

We know that performing the three row operations on M will result in I. Thus,

we can write out the row operations as three matrices E1, E2, and E3:

Thus, we have that:

We can also find M:

The following table summarizes the results.

| fact |

justification |

| (1) |

{M | M is a finite product of elementary matrices} |

= |

{M | rref M = I} |

I is an elementary matrix;

sequences of row operations

are equivalent to multiplication by

elementary matrices |

| (2) |

{M | M is a finite product of elementary matrices} |

⊂ |

{M | M is invertible} |

elementary matrices are invertible;

products of invertible matrices are invertible |

| (3) |

{M | rref M = I} |

⊂ |

{M | M is invertible} |

fact (1) in this table;

fact (2) in this table;

transitivity of equality |

| (4) |

{M | M is invertible} |

⊂ |

{M | rref M = I} |

proof by contradiction;

non-invertible M implies

rref M has all zeroes in bottom row |

| (5) |

{M | M is invertible} |

= |

{M | rref M = I} |

for any sets A,B,

A ⊂ B and B ⊂ A

implies A = B |

| (6) |

{M | M is a finite product of elementary matrices} |

= |

{M | M is invertible} |

fact (1) in this table;

fact (5) in this table;

transitivity of equality |

Given all these results, we can say that the properties for a matrix M in the following table are all equivalent:

if any of these is true, then all of them are true. If any of these is false, then all of them are false.

| M is invertible |

| det M ≠ 0 |

| the columns of M are (setwise) linearly independent |

| Mv = w has exactly one solution |

| M is a finite product of elementary matrices |

Fact: For matrices M ∈ Rn×n, rref M is guaranteed to be upper triangular. Notice that this means that for some finite

product of elementary matrices E1 ⋅ ... ⋅ En, it is the case that

M = (E1 ⋅ ... ⋅ En) ⋅ U.

If E1 ,..., En are all lower triangular, then then M has an LU decomposition. However, this will not always be the case. But recall that

E1 ,..., En are all elementary matrices.

Fact: Given any product of elementary matrices E1 ⋅ ... ⋅ En, it is possible to find a lower triangular matrix

L by applying a finite number of elementary swap operations

S1 ,..., Sn such that L = S1 ⋅ ... ⋅ Sn ⋅ E1 ⋅ ... ⋅ En is lower triangular; then we have:

| = | | S1 ⋅ ... ⋅ Sn ⋅ E1 ⋅ ... ⋅ En |

| |

(Sn -1 ⋅ ... ⋅ S1 -1) ⋅ L | |

| = | |

Thus, E1 ⋅ ... ⋅ En can be decomposed into a lower triangular matrix and a product of elementary swap matrices.

Fact: Any product of a finite number of swap elementary matrices S1 ,..., Sn is a permutation matrix.

Fact: Any matrix M can be written as the product of three matrices P ⋅ L ⋅ U where P is a permutation matrix, L is a lower-triangular matrix, and U is an upper-triangular matrix,

where:

Example: Suppose we have a system in which a single object is traveling along one spatial dimension (i.e., in a straight line).

The object has a distance travelled, a velocity, and an acceleration; these are the three dimensions of the system:

- distance (m)

- velocity (m/s)

- acceleration (m/s2)

Consider the following equations that can be used to compute the distance the object travels and its final velocity given its

acceleration a ∈ R+, initial velocity v0 ∈ R+, and the amount of time t ∈ ∈ R+ it travels.

Suppose we want to convert a view of the system state that describes the acceleration and velocity at time 0 into a view of the system

state that represents the distance travelled and velocity at time t. This conversion operation can be represented using a matrix M.

| = | | | | | | 0.5 t2 dist. at t+1 / accel. at t | t dist. at t+1 / vel. at t | |

t vel. at t+1 / accel. at t | 1 vel. at t+1 / vel. at t |

| |

| |

| | = | | | | | | 0.5 t2 m / (m/s2) | t m / (m/s) | |

t (m/s) /(m/s2) | 1 (m/s)/(m/s) |

| |

| |

| | = | | | | | | 0.5 t2 s2 | t s | |

t s | 1 scalar identity |

| |

|

|

|

This matrix is invertible. This immediately tells us that it is possible to derive the initial

acceleration and velocity given only the amount of time that has elapsed,

the distance travelled, and the final velocity.

By computing the inverse of this matrix, we can obtain formulas that allow us to derive the

initial velocity and acceleration of an object given how much time has passed, how far it has travelled, and its current velocity.

| = | |

| | | | -2/t2 accel. at t / dist. at t+1

| t accel. at t / vel. at t+1

| | t vel. at t / dist. at t+1

| 1 vel. at t / vel. at t+1

|

| |

| |

| | = | |

| | | | -2/t2 (m/s2)/m

| 2/t (m/s2) / (m/s)

| | 2/t (m/s) / m

| -1 (m/s)/(m/s)

|

| |

| |

| | = | |

| | | | -2/t2 1/s2

| 2/t 1 / s

| | 2/t 1 / s

| -1 scalar identity

|

| |

|

|

|

The formulas can be obtained by multiplying M -1 by a system state describing the distance travelled and current velocity.

This yields:

We could have also obtained the above formulas using manipulation of equations of real numbers,

or via the corresponding row operations. Let us consider the latter approach:

| = | | |

| | | | 0.5 t2 | t | | t − (2/t ⋅ 0.5 t2) | 1 − (2/t ⋅ t) |

| |

| ⋅ | | | |

| = | | | = | | | = | | |

| | | | 0.5 t2 − (t ⋅ 0) | t − (t ⋅ 1) | | 0 | 1 |

| |

| ⋅ | | | |

| = | | | | | | d − (t ⋅ ((2d/t) − v)) | | (2d/t) − v |

| |

| |

| | = | | | = | | | | | | (2/t2) ⋅ (− d + v t) | | (2d / t) − v |

| |

| |

| | = | |

3.9. Matrix transpose

Definition: The transpose of a matrix M ∈ Rn×n, denoted M⊤, is defined to be A such that for all i and j, Aij = Mji.

Fact: If a matrix M is scalar, diagonal, or symmetric, M⊤ = M. If a matrix M is upper triangular, M⊤ is lower triangular (and vice versa).

Example: Below are some examples of matrices and their transposes:

Fact: For A, B ∈ Rn×n, it is always the case that:

Fact: It is always the case that ( AB) ⊤ = B⊤ A⊤. If M = AB, then:

| = | | ith row of A ⋅ jth column of B |

| | = | | ith column of A⊤ ⋅ jth row of B⊤ |

| | = | | jth row of B⊤ ⋅ ith column of A⊤ |

| | = | |

Example: We consider the following example with matrices in R3× 3. Let a, b, c, x, y, and z be vectors in R3, with

vi representing the ith entry in a vector v. Suppose we have the following product of two matrices. Notice that a, b, and c are the rows of the left-hand matrix,

and x, y, and z are the columns of the right-hand matrix.

| | | | | a1 | a2 | a3 | | b1 | b2 | b3 | | c1 | c2 | c3 |

| |

|

⋅

| | | | x1 | y1 | z1 | | x2 | y2 | z2 | | x3 | y3 | z3 |

| |

|

| |

| = | |

| | | | (a1, a2, a3) ⋅ (x1, x2, x3) | (a1, a2, a3) ⋅ (y1, y2, y3) | (a1, a2, a3) ⋅ (z1, z2, z3)

| | (b1, b2, b3) ⋅ (x1, x2, x3) | (b1, b2, b3) ⋅ (y1, y2, y3) | (b1, b2, b3) ⋅ (z1, z2, z3)

| | (c1, c2, c3) ⋅ (x1, x2, x3) | (c1, c2, c3) ⋅ (y1, y2, y3) | (c1, c2, c3) ⋅ (z1, z2, z3)

|

| |

| |

| | = | | | | | | a ⋅ x | a ⋅ y | a ⋅ z

| | b ⋅ x | b ⋅ y | b ⋅ z

| | c ⋅ x | c ⋅ y | c ⋅ z

|

| |

|

|

|

Suppose we take the transpose of both sides of the equation above. Then we would have:

|

( | | | | a1 | a2 | a3 | | b1 | b2 | b3 | | c1 | c2 | c3 |

| |

|

⋅

| | | | x1 | y1 | z1 | | x2 | y2 | z2 | | x3 | y3 | z3 |

| |

| )⊤

| |

| = | |

| | | | a ⋅ x | a ⋅ y | a ⋅ z

| | b ⋅ x | b ⋅ y | b ⋅ z

| | c ⋅ x | c ⋅ y | c ⋅ z

|

| |

| ⊤ |

| | = | | | | | | a ⋅ x | b ⋅ x | c ⋅ x

| | a ⋅ y | b ⋅ y | c ⋅ y

| | a ⋅ z | b ⋅ z | c ⋅ z

|

| |

| |

| | = | |

| | | | x ⋅ a | x ⋅ b | x ⋅ c

| | y ⋅ a | y ⋅ b | y ⋅ c

| | z ⋅ a | z ⋅ b | z ⋅ c

|

| |

| |

| | = | |

| | | | x1 | x2 | x3

| | y1 | y2 | y3

| | z1 | z2 | z3 |

| |

|

⋅

| | | | a1 | b1 | c1

| | a2 | b2 | c2

| | a3 | b3 | c3 |

| |

| |

| | = | |

| | | | x1 | y1 | z1 | | x2 | y2 | z2 | | x3 | y3 | z3 |

| |

| ⊤

⋅

| | | | a1 | a2 | a3 | | b1 | b2 | b3 | | c1 | c2 | c3 |

| |

| ⊤

|

|

Fact: If A is invertible, then so is A⊤. This can be proven using the fact directly above.

\forall A,B \in \R^(2 \times 2),

`(A) is invertible`

\implies

(A^(-1)) * A = [1 , 0; 0 , 1]

A^\t * (A^(-1))^\t = ((A^(-1)) * A)^\t

`` = [1,0;0,1]^\t

`` = [1,0;0,1]

Fact: det A = det A⊤. We can see this easily in the A ∈ R2×2 case.

\forall a,b,c,d \in \R,

\det [a,b;c,d] = a d-b c

`` = a d - c b

`` = \det [a,c;b,d]

\det [a,b;c,d] = \det [a,c;b,d]

3.10. Orthogonal matrices

Definition: A matrix M ∈ Rn×n is orthogonal iff M⊤ = M -1.

Fact: The columns of orthogonal matrices are always orthogonal unit vectors.

The rows of orthogonal matrices are always orthogonal unit vectors.

We can see this in the R2×2 case. For columns, we use the fact that

M⊤ ⋅ M = I:

| = | | | = | | |

| | | | (a,c) ⋅ (a,c) | (a,c) ⋅ (b,d) | | (b,d) ⋅ (a,c) | (b,d) ⋅ (b,d) |

| |

| | |

| = | |

For rows, we use that M ⋅ M⊤ = I:

| = | | | = | | |

| | | | (a,b) ⋅ (a,b) | (a,b) ⋅ (c,d) | | (c,d) ⋅ (a,b) | (c,d) ⋅ (c,d) |

| |

| | |

| = | |

Below, we provide a verifiable argument of the above fact for the R2×2 case.

\forall a,b,c,d \in \R,

[a,b;c,d] * [a,b;c,d]^\t = [1,0;0,1]

\implies

[a,b;c,d] * [a,c;b,d] = [1,0;0,1]

[a a + b b, a c + b d; c a + d b, c c + d d] = [1,0;0,1]

a a + b b = 1

[a;b] * [a;b] = 1

||[a;b]|| = 1

`([a;b]) is a unit vector`

c c + d d = 1

[c;d] * [c;d] = 1

||[c;d]|| = 1

`([c;d]) is a unit vector`

a c + b d = 0

[a;b] * [c;d] = 0

`([a;b]) and ([c;d]) are orthogonal`

Fact: Matrices representing rotations and reflections are orthogonal. We can show this for the general (counterclockwise) rotation matrix:

| = | | | = | | | | | | cos 2 θ + sin 2 θ | -cos θ sin θ + sin θ cos θ | | -sin θ cos θ + cos θ sin θ | sin 2 θ + cos 2 θ |

| |

| |

| | = | |

Example: Suppose that the hour hand of an animated

12-hour clock face is represented using a vector [ x ; y ]. To

maintain the time, the coordinates [ x ; y ] must be updated once

every hour by applying a matrix M to the vector representing

the hour hand. What is the matrix M?

Since the hour hand must be rotated by 360/12 = 30 degrees in the clockwise direction while

the rotation matrix represents counterclockwise rotation, we actually want to find the

rotation matrix for 360 - (360/12) = 330 degrees.

Thus, M must be the rotation matrix for θ = 2π - (2π / 30), where θ is specified

in radians. Thus, we have:

| = | | | = | | | = | | | | | | cos (330/360 ⋅ 2π) | −sin (330/360 ⋅ 2π) | | sin (330/360 ⋅ 2π) | cos (330/360 ⋅ 2π) |

| |

| |

| | = | |

Example: You are instructed to provide a simple algorithm for

drawing a spiral on the Cartesian plane. The spiral is obtained using

counterclockwise rotation, and for every 360 degree

turn of the spiral, the spiral arm's distance from the origin

should double. Provide a matrix M ∈ R2×2 that will

take any point [ x ; y ] on the spiral and provide the next point

[ x' ; y' ] after θ radians of rotation (your definition of

M should contain θ).

It is sufficient to multiply a rotation matrix by a scalar

matrix that scales a vector according to the angle of

rotation. If the angle of rotation is θ, then the

scale factor s should be such that:

In the above, if n = π/θ then s is the nth root of 2.

Thus M would be:

| = | | | | | | 2θ/(2π) cos θ

| − (2θ/(2π)) sin θ

| | 2θ/(2π) sin θ

| 2θ/(2π) cos θ |

| |

| |

| |

Fact: Orthogonal matrices are closed under multiplication. For orthogonal A, B ∈ Rn×n we have:

Fact: Orthogonal matrices are closed under inversion and transposition. For orthogonal A ∈ Rn×n we have:

Thus, we can show that both A -1 and A⊤ are orthogonal.

We can summarize this by adding another row to our table of matrix subsets.

| subset of Rn×n |

definition |

closed under

matrix

multiplication |

properties of

matrix multiplication |

inversion |

| orthogonal matrices |

M⊤ = M -1 |

closed |

associative,

distributive with addition,

have identity |

nonzero members

have inverses;

closed under inversion |

Example: Let A ∈ R2×2 be an orthogonal matrix. Compute A A⊤ A.

We recall that because A is orthogonal, A⊤ = A -1. Thus, we have that

A A⊤ A = (A A -1) A = I A = A.

Fact: Suppose the last row of a matrix M ∈ Rn×n consists of all zeroes. Show that M is not invertible.

Suppose M is invertible. Then M⊤ is invertible. But the last column of M is all zeroes, which means it is a trivial linear

combination of the other column vectors of M. Thus, we have a contradiction, so M cannot be invertible.

3.11. Matrix rank

Yet another way to characterize matrices is by considering their rank.

Definition: We can define a the rank of a matrix M, rank M, as the number of nonzero rows in rref M.

We will see in this course that rank M is related in many ways to the various characteristics of a matrix.

Fact: Matrix rank is preserved by elementary row operations. Why is this the case? Because rref is idempotent and rank is defined in

terms of it:

| = | | |

number of nonzero rows in rref M | |

| = | | number of nonzero rows in rref (rref M) |

| | = | |

Fact: If M ∈ Rn×n is invertible, rank M = n. How can we prove this? Because all invertible matrices

have rref M = I, and I has no rows with all zeroes. If I ∈ Rn×n, then I has n nonzero rows.

Fact: For A ∈ Rn×n, rank A = rank (A⊤).

We will not prove the above in this course in general, but typical proofs

involve first proving that for all A ∈ Rn×n, rank A ≤ rank (A⊤). Why is this sufficient to complete the proof? Because we

can apply this fact for both A and A⊤ and use the fact that (A⊤)⊤ = A:

To get some intuition about the previous fact, we might consider the following question: if the columns of a matrix M ∈ R2×2

are linearly independent, are the rows also linearly independent?

Fact: The only matrix in Rn×n that has no nonzero rows in reduced row echelon form is I.

Fact: If a matrix M ∈ Rn×n has rank n, then it must be that rref M = I (and, thus, M is invertible). This is derived from

the fact above.

Fact: Invertible matrices are closed under the transposition operation. In other words, if M is invertible, then M⊤ is invertible. How

can we prove this using the rank operator? We know that rank is preserved under transposition, so we have:

Thus, the rank of rank(A⊤) is n, so it is invertible.

The following table summarizes the important facts about the rank and rref operators.

| rank (rref M) = rank M |

| rref (rref M ) = rref M |

| rank (M⊤) = rank M |

| for M ∈ Rn×n, M is invertible iff rank M = n |

Review 1. Vector and Matrix Algebra and Applications

The following is a breakdown of what you should be able to do at this point in the course

(and of what you may be tested on in an exam).

Notice that many of the tasks below can be composed.

This also means that many problems can be solved in more than one way.

- vectors

- definitions and algebraic properties of scalar and vector operations (addition, multiplication, etc.)

- vector properties and relationships between vectors

- dot product of two vectors

- norm of a vector

- unit vectors

- orthogonal projection of a vector onto another vector

- orthogonal vectors

- linear dependence of two vectors

- linear independence of two vectors

- linear combinations of vectors

- linear independence of three vectors

- lines and planes

- line defined by a vector and the origin ([0; 0])

- line defined by two vectors

- line in R2 defined by a vector orthogonal to that line

- plane in R3 defined by a vector orthogonal to that plane

- matrices

- algebraic properties of scalar and matrix multiplication and matrix addition

- collections of matrices and their properties (e.g., invertibility, closure)

- identity matrix

- elementary matrices

- scalar matrices

- diagonal matrices

- upper and lower triangular matrices

- matrices in reduced row echcelon form

- determinant of a matrix in R2×2

- inverse of a matrix and invertible matrices

- other matrix operations and properties

- determine whether a matrix is invertible

- using the determinant for matrices in R2×2

- using facts about rref for matrices in Rn×n

- algebraic properties of matrix inverses with respect to matrix multiplication

- transpose of a matrix

- algebraic properties of transposed matrices with respect to matrix addition, multiplication, and inversion

- matrix rank

- matrices in applications

- solve an equation of the form LU = w

- matrices and systems of states

- interpret partial observations of system states as vectors

- interpret relationships betweem dimensions in a system of states as a matrix

- given a partial description of a system state and a matrix of relationships, find the full description of the system state

- interpret system state transitions/transformations over time as matrices

- population growth/distributions over time

- compute the system state after a specifieds amount of time

- find the fixed point of a transition matrix

Below is a comprehensive collection of review problems going over the course material covered until this point. These problems are an

accurate representation of the kinds of problems you may see on an exam.

Example: Find any h ∈ R such that the following two vectors are linearly independent.

There are many ways to solve this problem. One way is to use the definition of linear dependence and find an h that does not satisfy it.

Then, we have that any h such that h ≠ -8 is sufficient to contradict linear dependence (and, thus, imply linear independence):

Another solution is to recall that orthogonality implies linear independence. Thus, it is sufficient to find h such that

the two vectors are orthogonal.

This implies h = 100.

| 5(20) + (-5)(-20) + (-2)h | |

| = | | | = | |

Example: Suppose we have a matrix M such that the following three equations are true:

Compute the following:

We should recall that multiplying a matrix by a canonical unit vector with 1 in the ith row in the vector gives us the ith column of the matrix.

Thus, we can immediately infer that:

Thus, we have:

| = | | | | | | (3,3,-3) ⋅ (2,1,-1) | | (-2,0,1) ⋅ (2,1,-1) |

| |

| | |

| = | |

Example: List at least three properties of the following matrix:

| |

The matrix has many properties, such as:

- it is an elementary matrix

- it is an invertible matrix

- it is an orthogonal matrix

- it is a symmetric matrix

- it has rank n

- its reduced row echelon form is the identity

Example: Find the matrix B ∈ R2×2 that is symmetric, has a constant diagonal

(i.e., all entries on the diagonal are the same real number), and satisfies the following

equation:

We know that B is symmetric and has a constant diagonal, so we need to solve for a and b in:

Example: Compute the inverse of the following matrix:

| |

One approach is to set up the following equation and solve for a,b,c, and d:

Another approach is to apply the formula for the inverse of a matrix in R2×2:

Example: Let a ∈ R be such that a ≠ 0. Compute the inverse of the following matrix:

| |

As in the previous problem, we can either solve an equation or apply the formula:

Example: Suppose x ∈ R is such that x ≠ 0. Compute the inverse of the following matrix:

| |

Because we have an upper triangular matrix, computing the inverse by solving the following equation is fairly efficient. Start by considering

the bottom row and its product with each of the columns. This will generate the values for g, h, and i. You can then proceed to the other

rows.

The solution is:

| | | | 1/x | 0 | 1/(2x) | | 0 | 1/x | 0 | | 0 | 0 | 1/4x |

| |

|

Example: Assume a matrix M ∈ R2×2 is symmetric, has constant diagonal, and all its entries are nonzero. Show that A cannot

be an orthogonal matrix.

Let a ≠ 0 and b ≠ 0, and let us define:

Suppose that M is an orthogonal matrix; then, we have that:

Thus, we have ba + ab = 0, so 2ab = 0. This implies that either a = 0 or b = 0. But this contradicts the assumptions, so M cannot be

orthogonal.

Example: Suppose the Earth is located at the origin and your spaceship is in space at the location corresponding to the vector

[ -5 ; 4; 2 ]. Earth is sending transmissions along the vector [ √(3)/3 ; √(3)/3 ; √(3)/3 ]. What is the shortest distance

your spaceship must travel in order to hear a transmission from Earth?

By the triangle inequality, for any vector v ∈ R3, the closest point to v on a line is the orthogonal projection

of v onto that line. We need to find the distance from the spaceship's current position to that point.

We first compute the orthogonal projection of [ -5 ; 4; 2 ] onto the line L

defined as follows:

We can compute the orthogonal projection using the formula for an orthogonal projection of one vector onto another.

Notice that the vector specifying the direction of transmission is already a unit vector:

Now, we must compute the distance between the destination above and the spaceship's current position:

| = | | | = | | √((16/3)2 + (-11/3)2 + (-5/3)2) |

| | = | | | = | |

Thus, the shortest distance is √(402)/3.

Example: Two communication towers located at v ∈ R2 and u ∈ R2 are sending directed signals to each other (it is only possible to hear

the signal when in its direct path). You are at the origin and want to travel the shortest possible distance to

intercept their signals. What vector specifies the distance and direction you must travel to intercept their signals?

We can solve this problem by recognizing that the closest point to the origin on the line along which the signals can be intercepted is the vector that is orthogonal to the line (i.e., it is the orthogonal projection of the origin onto the

line). The definition of the line is:

| = | | { a ⋅ (u - v) + v | a ∈ R}

|

|

Thus, the point p ∈ R2 to which we want to travel must be both on the line and orthogonal to the

line (i.e., its slope must be orthogonal to any vector that represents the slope of the line, such as u - v). In other words, it must satisfy the following two equations:

Thus, it is sufficient to solve the above system of equations for p.

4. Vector Spaces

We are interested in studying sets of vectors because they can be used to model sets of system states, observations

and data that might be obtained about systems, geometric shapes and regions, and so on. We can then represent real-world problems

(e.g., given some observations, what is the actual system state) as equations of the form M ⋅ v = w, and sets of vectors as

the collections of possible solutions to those equations. But what is exactly the set of possible solutions to M ⋅ v = w?

Can we characterize it precisely? Can we define a succinct notation for it? Can we say anything about it beyond simply solving the equation?

Does this tell us anything about our system?

In some cases, it may make more sense to consider only a finite set of system states, or an infinite set of discrete states (i.e., only

vectors that contain integer components); for example, this occurs if vectors are used to represent the number of atoms, molecules, cows, chickens,

power plants, single family homes, and so on. However, in this course, we make the assumption that our our sets

of system states (our models of systems) are infinite and continuous (i.e., not finite and not discrete); in this context, this simply

means that the entries in the vectors we use to represent system states are real numbers.

Notice that the assumption of continuity means that, for example, if we are looking for a particular state (e.g., a state corresponding

to some set of observations), we allow the possibility that the state we find will not correspond exactly to a state that "makes sense". Consider

the example problem involving the barn of cows and chickens. Suppose we observe 4 heads and 9 legs. We use the matrix that

represents the relationships between the various dimensions of the system to find the number of cows and chickens:

Notice that the solution above is not an integer solution; yet, it is a solution to the equation we introduced because the set of system states

we are allowing in our solution space (the model of the system) contains all vectors in R2, not just those with integer entries.

As we did with vectors and matrices, we introduce a succinct language (consisting of symbols, operators, predicates, terms, and formulas) for

infinite, continuous sets of vectors. And, as with vectors and matrices, we study the algebraic laws that govern these symbolic expressions.

4.1. Sets of vectors and their notation

We will consider three kinds of sets of vectors in this course; they are listed in the table below.

| kind of set (of vectors) |

maximum

cardinality

("quantity of

elements") |

solution space of a... |

examples |

| finite set of vectors |

finite |

|

- {(0,0)}

- {(2,3),(4,5),(0,1)}

|

| vector space |

infinite |

homogeneous system of

linear equations:

M ⋅ v = 0 |

- {(0,0)}

- R

- R2

- span{(1,2),(2,3),(0,1)}

- any point, line, or plane

intersecting the origin

|

| affine space |

infinite |

nonhomogeneous

system of

linear equations:

M ⋅ v = w |

- { a + v | v ∈ V} where

V is a vector space and a is a vector

- any point, line, or plane

|

To represent finite sets of vectors symbolically, we adopt the convention of simply listing the vectors between a pair of braces (as with any

set of objects). However, we need a different convention for symbolically representing vector spaces and affine spaces. This is because we

must use a symbol of finite size to represent a vector space or affine space that may contain an infinite number of vectors.

If the solution spaces to equations of the form M ⋅ v = w are infinite, continuous sets of vectors, in what way can be characterize them?

Suppose that M ∈ R2×2 and that is M invertible. Then we have that:

| = | | | = | | | = | | | = | | | = | | | = | | (s/(ad−bc)) ⋅ | | + (t/(ad−bc)) ⋅ | |

|

|

Notice that the set of possible solutions v is a linear combination of two vectors in R2. In fact, if a collection of solutions

(i.e., vectors) to the equation M ⋅ v = 0 exists, it must be a set of linear combinations (in the more general case of

M ⋅ v = w, it is a set of linear combinations with some specified offset). Thus, we introduce a succinct notation

for a collection of linear combinations of vectors.

Definition: A span of a set of vectors { v1, ..., vn } is the set of all linear combinations of vectors in { v1, ..., vn }:

| span { v1, ..., vn } = { a1 ⋅ v1 + ... + an ⋅ vn | a1 ∈ R, ..., an ∈ R }

|

|

Example: For each of the following spans, expand the notation into the equivalent set comprehension and determine if the set

of vectors is a point, a line, a plane, or a three-dimensional space.

- The set defined by:

The above span is a line.

- The set defined by:

The above span is a line.

- The set defined by:

The above span is a plane.

- The set defined by:

The above span is a set containing a single point (the origin).

- The set defined by:

| = | | {a ⋅ | | + b ⋅ | | + c ⋅ | | | a,b,c ∈ R}

| |

| = | |

The above span is a three-dimensional space.

Example: Using span notation, describe the set of vectors that are orthogonal to the vector [ 1 ; 2 ] ∈ R2.

We know that the set of vectors orthogonal to [ 1 ; 2 ] can be defined as the line L where:

It suffices to find a vector on the the line L. The equation for the line is:

We can choose any point on the line y = (-1/2) x; for example, [ 2 ; -1 ]. Then, we have that:

Example: Using span notation, describe the set of solutions to the following matrix equation:

Notice that the above equation implies two equations:

In fact, the second equation provides no additional information because 2 ⋅ [ 1 ; 2 ] = [ 2 ; 4 ]. Thus, the solution space

is the set of vectors:

We can now use our solution to the previous problem to find a span notation for the solution space:

Example: Suppose also that system states are described by vectors v ∈ R3 with the following units for each dimension:

| = | | | | | | x # carbon atoms | | y # hydrogen atoms | | z # oxygen atoms |

| |

|

|

|

Individual molecules are characterized using the following vectors:

| C3H8: | | , O2: | | , CO2: | | , H2O: | |

|

|

-

Suppose that a mixture contains only molecules of water (H2O) and carbon dioxide (CO2).

Using the span notation, specify the possible set of system states that satisfy these criteria.

The possible set of system states for the mixture is: